Electronics & Technology Principles

- Alchemy

- Advanced Driver Assistance Systems (ADAS)

- AI Image Generators

- Audiophile

- Beta Decay

- Blocking Oscillator

- Bohr-Rutherford Atomic Model

- Cable Television (CATV)

- COBOL Language

- Combinational Logic

- Conventional Current Flow

- Coronal Hole

- Cosmic Microwave Background Radiation (CMB)

- Dark Energy

- Dark Matter

- Dellinger Effect

- Direct Conversion vs. Heterodyne vs. Superheterodyne

- Duality in Electricity and Magnetism

- Dual Tone Multiple Frequency (DTMF)

- Effective Isotropic Radiated Power (EIRP)

- Electric Charge

- Electron Current Flow

- Electric Vehicle Full Life Cycle Cost

- Free Neutron Decay

- Gauss's Law

- Golden Ratio | Golden Number

- Haas (Precedence) Effect

- Hall Effect

- Human Body Model (HBM)

- Hysteresis

- International Geophysical Year (IGY)

- Industrial, Scientific, and Medical (ISM) Bands

- Ionosphere

- Kirchhoff's Current Law

- Kirchhoff's Voltage Law

- Left-Hand Rule of Electricity

- Left-Hand Rule of Magnetism

- LoRa Standard

- Loran

- Magnetic Monopole

- Meteor Burst Communication

- Moore's Law

- Nomograph

- Occam's Razor (Ockham's Razor)

- Pay-TV

- Pi (π) Radians = 180°

- Pi (π) Calculation

- Proper Motion

- Radio Direction Finding (RDF)

- Relativity, General

- Relativity, Special

- Right-Hand Rule of Electricity

- Russian Duga OTH Radar

- Space-Based Cryptocurrency Data Mining

- Sequential Logic

- Sferics | Spherics

- Squeg | Squegging

- Superconductivity

- Superheterodyne Receivers

- Technophobe

- Tunguska Event, Siberia

- Twin Paradox

- VOR | VORTAC

- The War of the Currents | The Battle of the Currents

- War Production Board (War Powers Act)

- WiLo (Long-Range Wi-Fi)

- Wireless Transmission

- X-Ray Experiments by Thomas Edison

- Y2K (Millennium Bug)

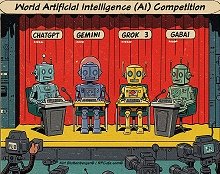

This content was generated primarily with the assistance of ChatGPT (OpenAI), and/or Gemini (Google), and/or Arya (GabAI), and/or Grok (x.AI), and/or DeepSeek artificial intelligence (AI) engines. Review was performed to help detect and correct any inaccuracies; however, you are encouraged to verify the information yourself if it will be used for critical applications. In all cases, multiple solicitations to the AI engine(s) were used to assimilate final content. Images and external hyperlinks have also been added occasionally, especially on extensive treatises. Courts have ruled that AI-generated content is not subject to copyright restrictions, but since I modify it, everything here is protected by RF Cafe copyright. Many of the images are likewise generated and modified. Your use of this data implies an agreement to hold totally harmless Kirt Blattenberger, RF Cafe, and any and all of its assigns. Thank you.

AI Technical Trustability Update

While working on an update to my RF Cafe Espresso Engineering Workbook project to add a couple of calculators for FM sidebands (available soon), I put several AI agents to the test. The good news is that AI provided excellent VBA code to generate a set of Bessel function plots. The bad news is that when I asked for a table showing the modulation indices at which sidebands 0 (carrier) through 5 vanish, none of the agents got it right. Some were really bad. The AI agents typically explain their reasoning and method correctly, then go on to produce bad results. Even after errors are pointed out, subsequent results are still wrong. I do a lot of AI work and see this often, even with subscriptions to the professional versions. I ultimately generated the table myself. There is going to be a lot of inaccurate information out there based on unverified AI queries, so beware.
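For readers who want to check such a table independently, here is a minimal sketch in Python (not the VBA used for the workbook, and not the table mentioned above). It relies on the standard result that the amplitude of FM sideband n is proportional to the Bessel function J_n(β), where β is the modulation index, so a sideband vanishes wherever J_n(β) = 0. The function names `bessel_j` and `sideband_nulls`, the scan step, and the integration step count are illustrative assumptions. A familiar sanity check is the first carrier null, which falls near β ≈ 2.405.

```python
import math

def bessel_j(n, x, steps=400):
    """Approximate J_n(x) from Bessel's integral:
    J_n(x) = (1/pi) * integral from 0 to pi of cos(n*t - x*sin(t)) dt,
    evaluated with the trapezoidal rule (step count is an assumption)."""
    def integrand(t):
        return math.cos(n * t - x * math.sin(t))
    h = math.pi / steps
    total = 0.5 * (integrand(0.0) + integrand(math.pi))
    total += sum(integrand(k * h) for k in range(1, steps))
    return total * h / math.pi

def sideband_nulls(n, count=3, x_max=30.0, dx=0.05):
    """Return the first `count` positive modulation indices where J_n vanishes.
    Scans for sign changes in steps of dx, then refines each by bisection.
    The scan starts at dx so the trivial zero at x = 0 (n >= 1) is skipped."""
    nulls = []
    x_lo = dx
    f_lo = bessel_j(n, x_lo)
    while len(nulls) < count and x_lo < x_max:
        x_hi = x_lo + dx
        f_hi = bessel_j(n, x_hi)
        if f_lo * f_hi < 0:  # sign change brackets a zero
            a, b, fa = x_lo, x_hi, f_lo
            for _ in range(50):  # bisection refinement
                m = 0.5 * (a + b)
                fm = bessel_j(n, m)
                if fa * fm <= 0:
                    b = m
                else:
                    a, fa = m, fm
            nulls.append(0.5 * (a + b))
        x_lo, f_lo = x_hi, f_hi
    return nulls

# Print the first three nulls for sidebands 0 (carrier) through 5.
for order in range(6):
    row = ", ".join(f"{z:.4f}" for z in sideband_nulls(order))
    print(f"sideband {order}: {row}")
```

The integral form was chosen only because it needs nothing beyond the standard library; a numerical library with Bessel zero routines would be a reasonable cross-check on the output.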

Copyright: 1996 - 2026

About RF Cafe

RF Cafe began life in 1996 as "RF Tools" in an AOL screen name web space totaling 2 MB. Its primary purpose was to provide me with ready access to commonly needed formulas and reference material while performing my work as an RF system and circuit design engineer. The World Wide Web (Internet) was largely an unknown entity at the time and bandwidth was a scarce commodity. Dial-up modems blazed along at 14.4 kbps while tying up your telephone line, and a lady's voice announced "You've Got Mail" when a new message arrived...

All trademarks, copyrights, patents, and other rights of ownership to images and text used on the RF Cafe website are hereby acknowledged.